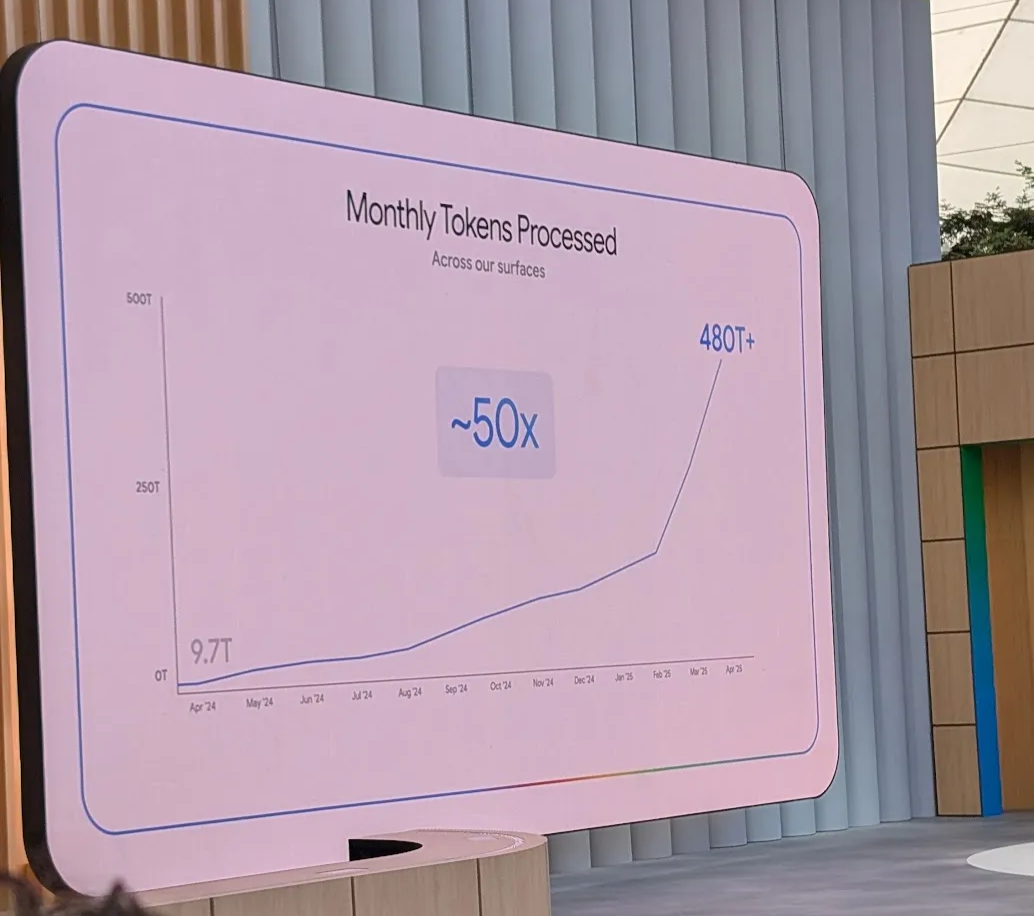

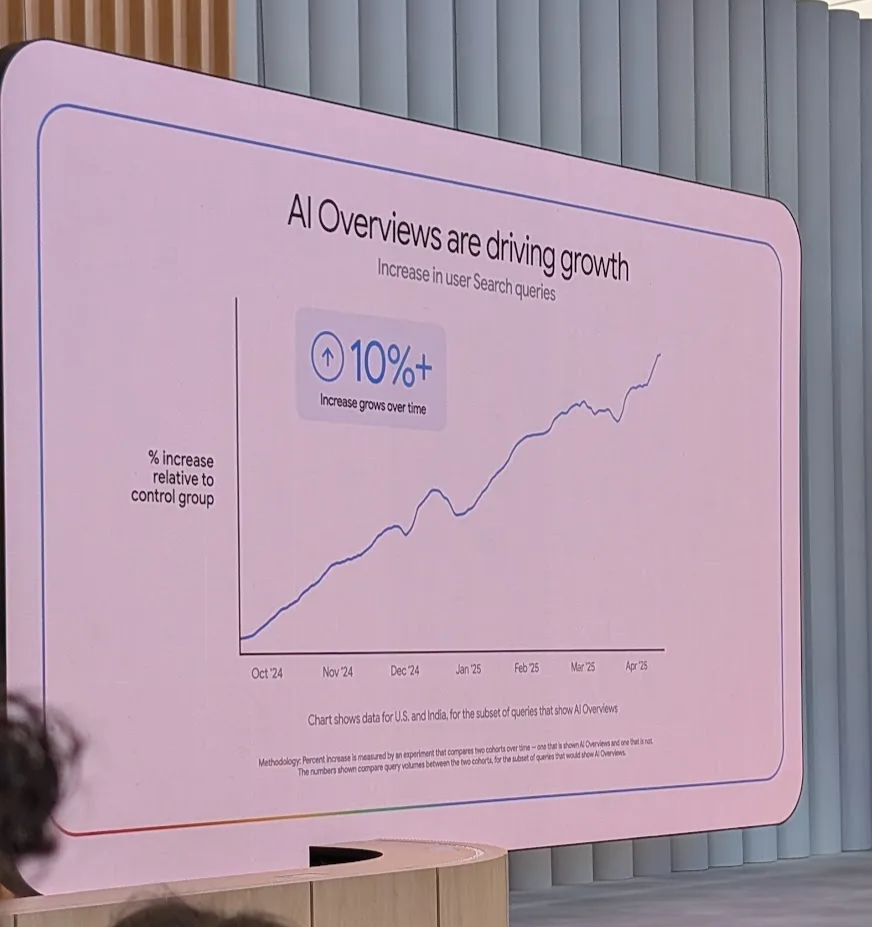

A few interesting numbers to start with. I found them really powerful, and they speak volumes about the AI landscape in general.

Monthly token usage went from 9.7T to 480T in just 1 year, roughly a 50x increase! This growth demonstrates the rapid adoption of AI technologies.
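To put that jump in perspective, here is a quick back-of-the-envelope calculation. It assumes, purely for illustration, that usage compounded smoothly month over month between the two reported figures; the actual month-by-month curve is not something I have.

```python
def growth_stats(start_tokens: float, end_tokens: float, months: int = 12):
    """Return (overall multiple, implied average monthly growth rate)."""
    multiple = end_tokens / start_tokens
    # Assumption: smooth compounding, so the monthly factor is the
    # months-th root of the overall multiple.
    monthly_rate = multiple ** (1 / months) - 1
    return multiple, monthly_rate


if __name__ == "__main__":
    multiple, monthly_rate = growth_stats(9.7e12, 480e12)
    print(f"{multiple:.1f}x overall, about {monthly_rate:.0%} per month")
```

Under that smoothing assumption, the two figures work out to roughly 49x overall, or about 38% growth every month for a year.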

AI Overviews are leading to 10% growth in user engagement. People are finding them useful, and that is leading to better satisfaction scores.

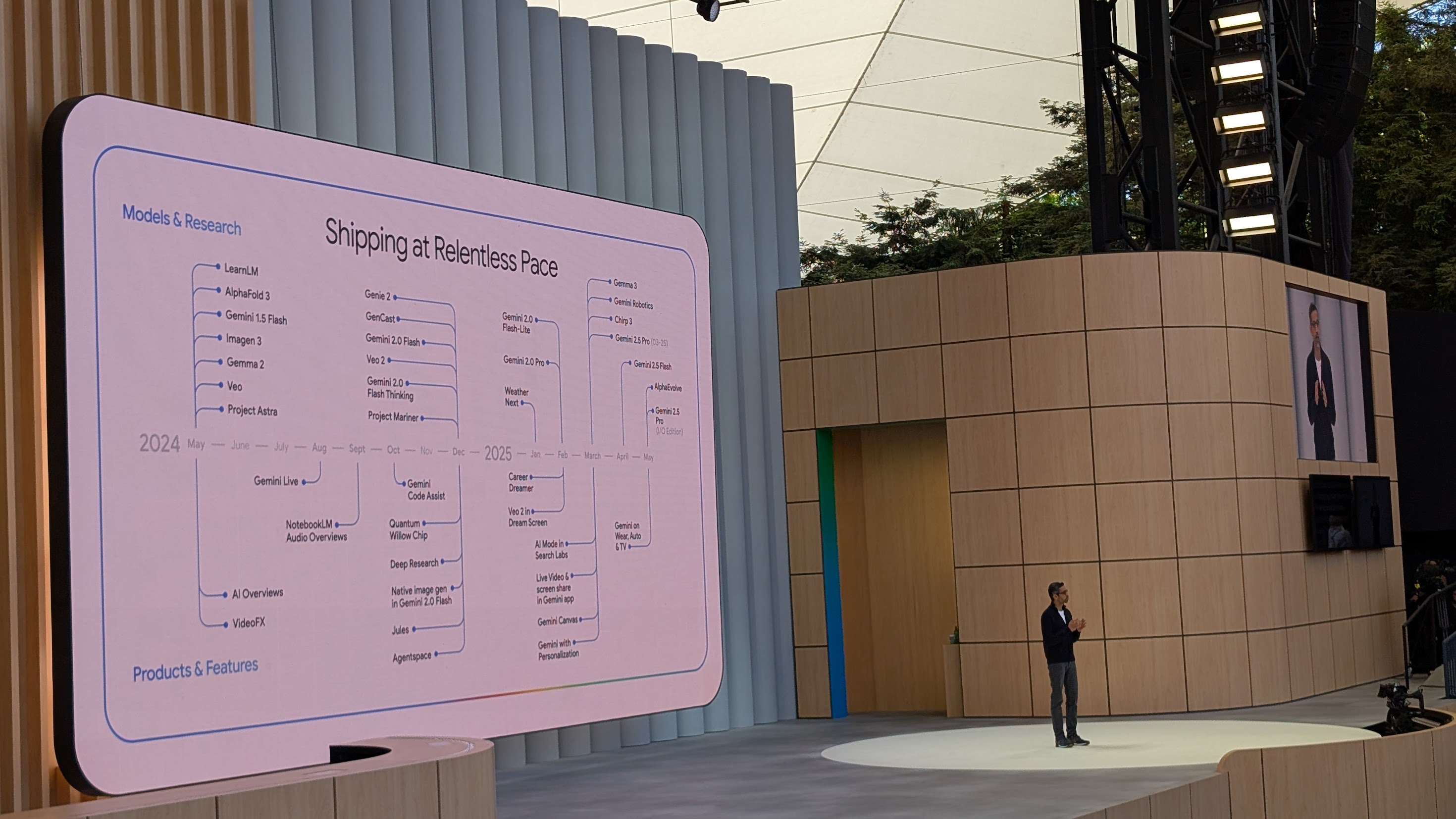

Gemini and Gemini API

The Gemini API is getting really feature-rich. The picture below sums up how Google sees it, and it’s getting better all the time.

Key Features

URL Context - One of my favorite features announced and demoed in the latest release:

- Allows the API to get real-time information and answer questions based on a URL

- Opens up numerous use cases in building applications and architecting experiences

- Functions like Retrieval Augmented Generation (RAG), but dynamic and done at runtime

- Previously, when questions were about certain links, we had to scrape pages and provide context to the LLM, adding latency overhead and complexity

- Now it’s just a simple flag to enable

Google Search Grounding:

- May be a no-brainer, but it’s just a flag away in the API

- Enables Google Search integration (though much more expensive compared to Perplexity)
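To make the "just a flag" point concrete, here is a minimal sketch of what the request body could look like with either feature switched on. It only builds the JSON and makes no network call. The tool field names `url_context` and `google_search` are my assumption of how the REST API spells these flags, and `build_request` and the example URL are hypothetical, so check the current Gemini API reference before relying on them.

```python
import json


def build_request(prompt: str, *, url_context: bool = False,
                  search_grounding: bool = False) -> dict:
    """Build a generateContent-style JSON body with optional tool flags."""
    body = {"contents": [{"parts": [{"text": prompt}]}]}
    tools = []
    if url_context:
        # Assumed field name for the URL Context tool.
        tools.append({"url_context": {}})
    if search_grounding:
        # Assumed field name for Google Search grounding.
        tools.append({"google_search": {}})
    if tools:
        body["tools"] = tools
    return body


if __name__ == "__main__":
    body = build_request("Summarize https://example.com/post", url_context=True)
    print(json.dumps(body, indent=2))
```

The point of the sketch is the shape: the prompt stays the same, and the scraping pipeline we used to maintain collapses into one extra entry in `tools`.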

Live API:

- This has got to be the most exciting one for me

- Slick integration into the SDK

- Uses WebSockets for bidirectional data transfer

- Can build near real-time experiences

- Fastest way to prototype is in AI Studio - once you’re happy, you can copy the code and run it locally

- Voices are pretty cool

- Function calling support for the Live API was announced as coming soon, which is really exciting
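The bidirectional part is what makes near real-time experiences possible: the client keeps sending while responses stream back on the same connection. The sketch below illustrates only that pattern. It is not the Live API or its SDK; two in-memory `asyncio` queues stand in for the WebSocket, and `fake_model` is a hypothetical server that simply upper-cases whatever it receives.

```python
import asyncio


async def fake_model(inbound: asyncio.Queue, outbound: asyncio.Queue) -> None:
    """Hypothetical server side: reply to each chunk as soon as it arrives."""
    while True:
        chunk = await inbound.get()
        if chunk is None:              # sentinel: client finished sending
            await outbound.put(None)   # tell the client we are done too
            return
        await outbound.put(chunk.upper())


async def send_chunks(inbound: asyncio.Queue, chunks: list) -> None:
    """Client sender: push chunks without waiting for replies."""
    for chunk in chunks:
        await inbound.put(chunk)
        await asyncio.sleep(0)         # yield so other tasks can run
    await inbound.put(None)


async def receive_chunks(outbound: asyncio.Queue) -> list:
    """Client receiver: collect replies as they stream back."""
    received = []
    while (chunk := await outbound.get()) is not None:
        received.append(chunk)
    return received


async def run_session(chunks: list) -> list:
    inbound, outbound = asyncio.Queue(), asyncio.Queue()
    server = asyncio.create_task(fake_model(inbound, outbound))
    # Sending and receiving run concurrently, as they would over one socket.
    _, received = await asyncio.gather(
        send_chunks(inbound, chunks), receive_chunks(outbound)
    )
    await server
    return received


def demo() -> list:
    return asyncio.run(run_session(["hello", "world"]))
```

In a real integration the queues would be replaced by the SDK's session object, but the structure (one task sending, one task receiving) tends to stay the same.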

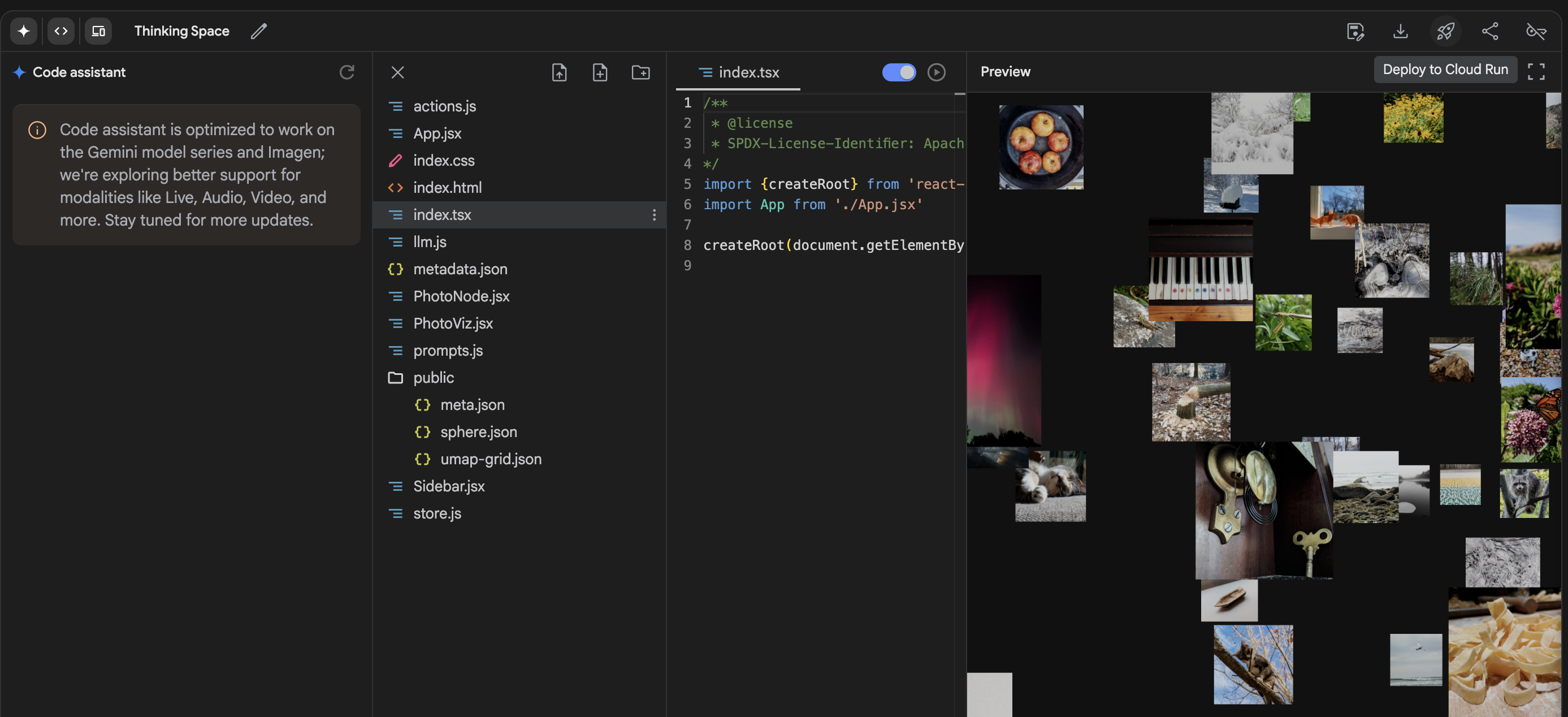

Google AI Studio

This has been my go-to place for creating applications based on the Gemini API. I’ve been an active user of AI Studio since the day it launched, around 1.5 years ago.

For me, the active evolution of AI Studio is one of the biggest signs of how Google has been catering to developers and taking an iterative approach to Gemini launches. It transformed from a buggy application into one of the best developer experiences for using LLM APIs, prototyping, and building applications.

Underappreciated Features

Developer Onboarding:

- No need for credit card - just sign up and get an API key

- I don’t think there’s any other model provider that does this

- Auto-creates a GCP account behind the scenes if one doesn’t already exist

- I still remember when I originally onboarded at launch - I had to manually set up GCP and link it to use the API (it was not fun). Now it just happens automagically!

Generous Free Tier:

- If using Gemini Flash, you can get a lot of value out of it

Sample Applications:

- The number of sample applications they have is awesome

- Really sparks the mind about different experiences that can be created with Gemini’s power

New Features Announced at AI Studio

This Google I/O had numerous improvements to an already awesome developer experience:

Full-Blown Code Editor:

- Complete development environment within AI Studio

Direct Deployment Option:

- Deploy applications co-created with Gemini directly to Google Cloud

- Remember, they automatically create a GCP account if you don’t have one

- This has huge implications and is a really good play to drive GCP adoption

- Making it this easy to deploy and share created apps is significant

- For newcomers, getting a web app online and accessible is quite difficult (companies like Replit and Lovable are trying to solve the same problem)

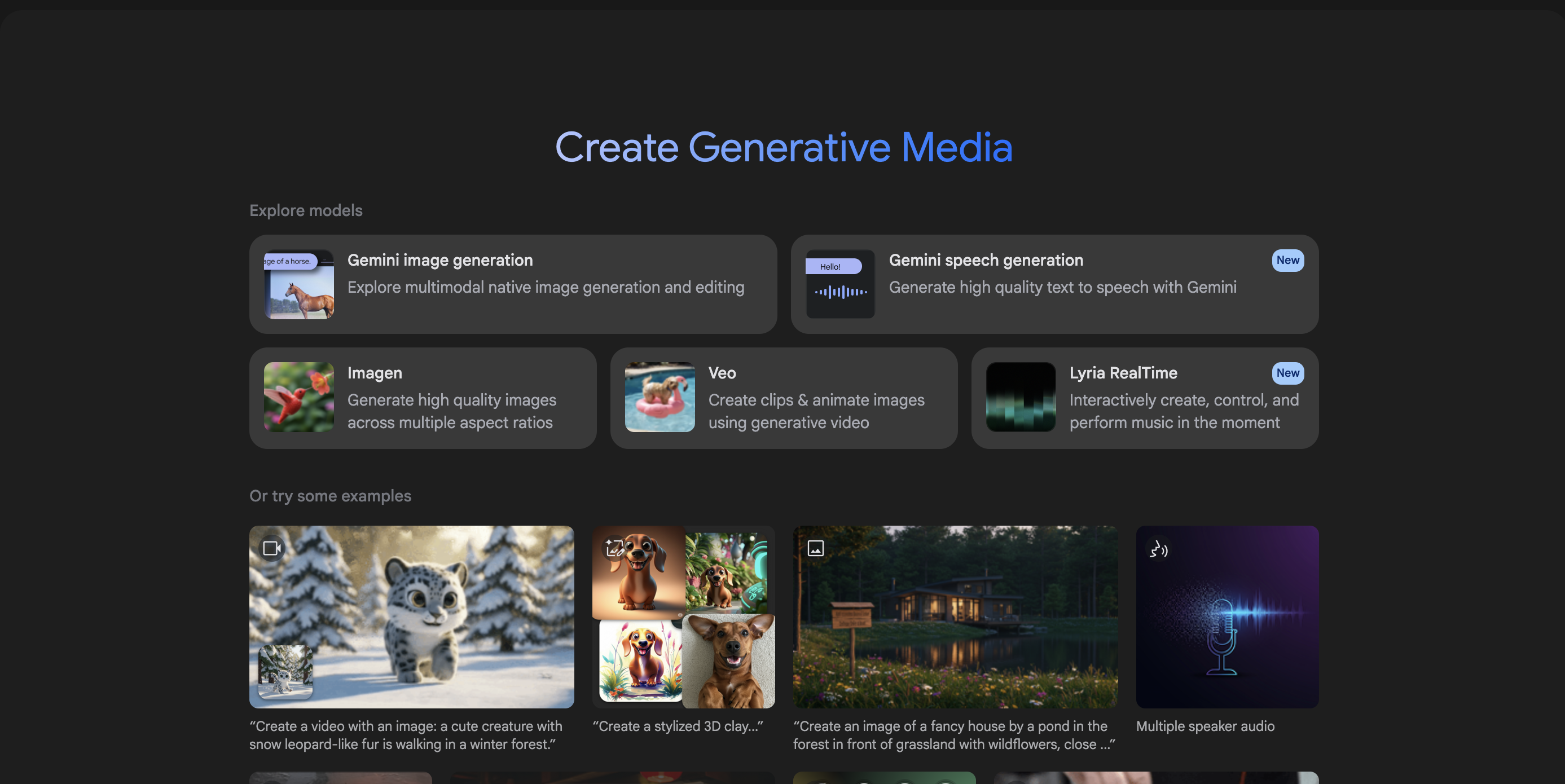

Integrated Media Generation:

- AI Studio now integrates other forms of Generative AI

- Can generate media with a separate “Generate Media” tab for building applications

Google’s Domain-Specific Applications

Film Collaboration

The collaboration with Darren Aronofsky to create a hybrid film with AI-generated content is pushing the boundaries of what’s possible when using Gemini as a creative tool in filmmaking. The trailer was super cool. The movie is directed by Eliza McNitt and was inspired by real-life events that took place during her own birth.

Behind-the-scenes video: https://youtu.be/4hzFi0V0xMU

Other Notable Projects

There are just too many to describe in detail, but each of them is significant:

- Lyria 2 and Music AI Sandbox in collaboration with Shankar Mahadevan

- DolphinGemma

- AlphaFold 3

- AI Co-scientist

- Veo 3

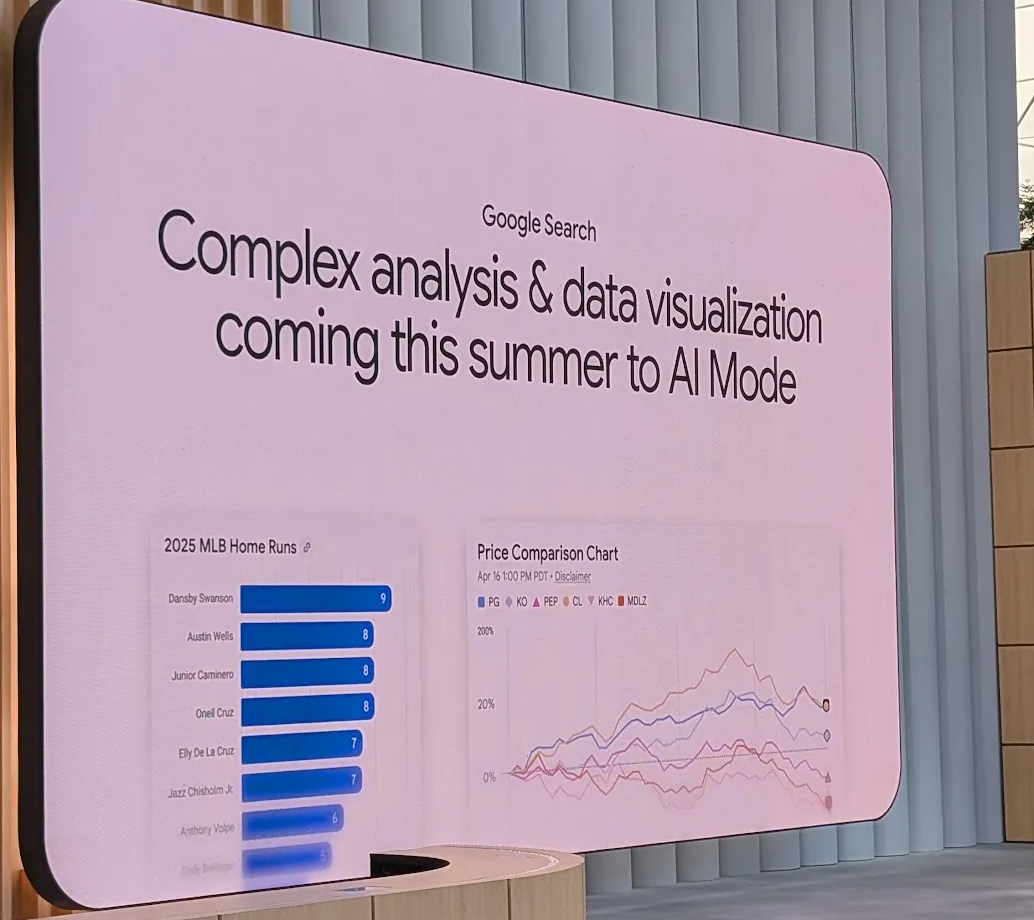

Search Evolution and AI Mode

At last year’s I/O, Google released prototypes of Project Mariner, Veo, and Astra. This year, several of these features are being integrated back into Search: a version of Astra, and a version of Mariner that can book tickets on your behalf.

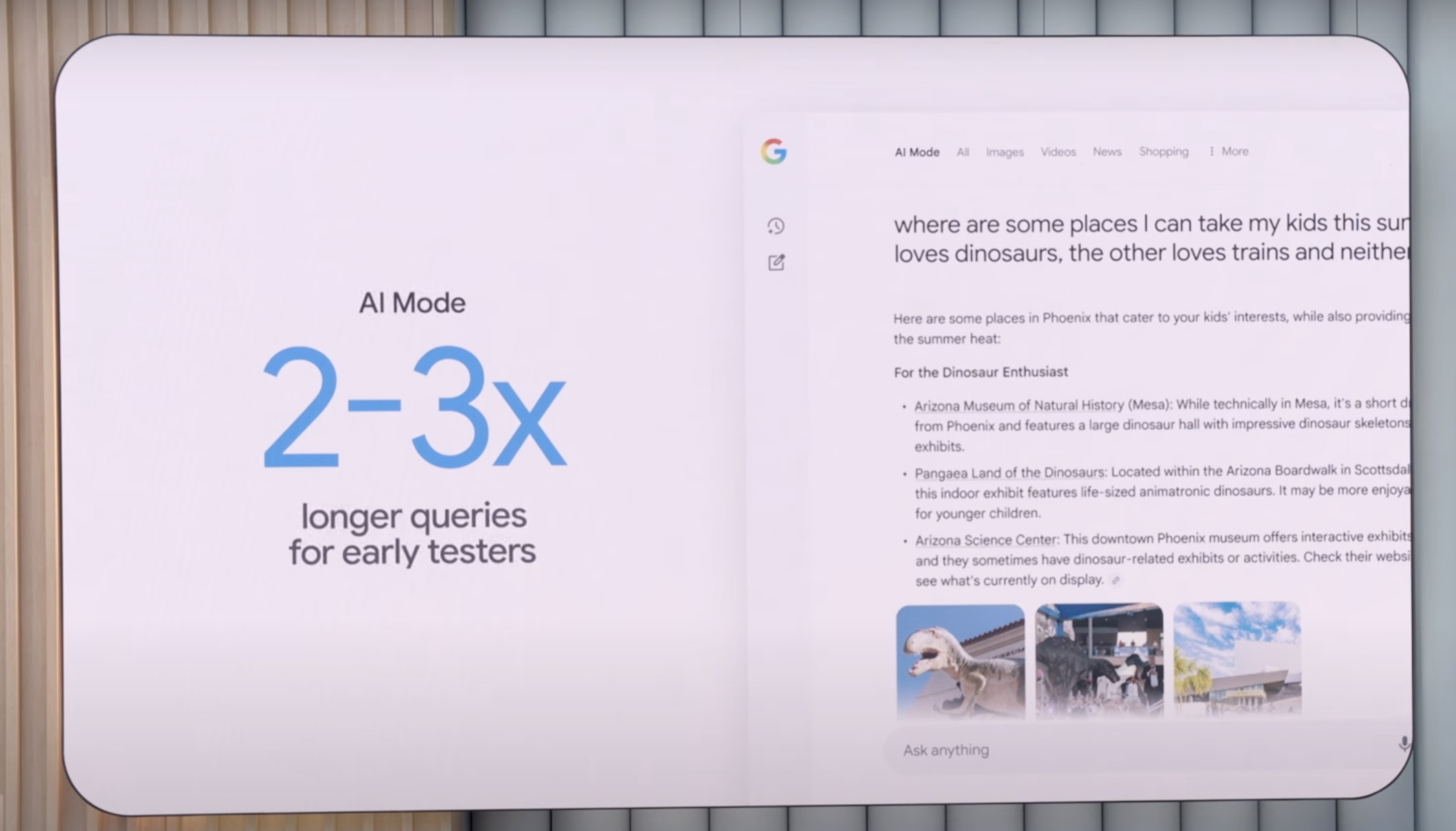

Search Query Patterns: The “ChatGPT effect” - queries are getting longer and more complex.

AI Mode incorporates many of the above features into the search experience.

Focus on Edge Devices

Chrome Advancements

Another Google play to make Gemini more relevant and the de facto model for client devices:

Browser API with Gemini Nano:

- Gemini Nano API integrated directly in browser

- Helps offload tasks to happen on client devices

- Allows architecting richer experiences

- Can result in reduced latency

- Enables hybrid application architectures
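A hybrid architecture here means deciding, per request, whether to run on the client or in the cloud. The sketch below is one way such a router could look. Everything in it is hypothetical: `HybridRouter`, the two callables, and the 500-character threshold are illustrative stand-ins, not the Chrome or Gemini Nano API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class HybridRouter:
    run_on_device: Callable[[str], str]  # hypothetical wrapper around an on-device model
    run_in_cloud: Callable[[str], str]   # hypothetical wrapper around a hosted model
    device_available: bool = True
    max_device_chars: int = 500          # illustrative threshold, not a real limit

    def choose(self, prompt: str) -> str:
        """Prefer the device for short prompts when a local model is available."""
        if self.device_available and len(prompt) <= self.max_device_chars:
            return "device"
        return "cloud"

    def run(self, prompt: str) -> str:
        if self.choose(prompt) == "device":
            try:
                return self.run_on_device(prompt)
            except RuntimeError:
                # Local model failed (for example, not downloaded yet): fall back.
                pass
        return self.run_in_cloud(prompt)


if __name__ == "__main__":
    router = HybridRouter(
        run_on_device=lambda p: "device:" + p,
        run_in_cloud=lambda p: "cloud:" + p,
    )
    print(router.run("Summarize this tab"))
```

Short, latency-sensitive tasks stay local, while long or failed requests go to the hosted model. The real decision would likely also weigh privacy, battery, and model availability.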

Gemma (Open Source Model)

Gemma 3 is my personal favorite OSS model right now, so I was excited about this talk and wanted to understand what’s happening in this space.

All I can say is that they’re taking this model and OSS quite seriously because they need a lightweight model to deploy on client devices and to be the de facto model for people working on Android applications or Chrome-based development.

Performance Highlights

To put into perspective how good this model is compared to previous OSS models:

- Successfully completed a complex visual understanding task requiring:

  - Great instruction following about output requirements

  - Spatial understanding of flowchart movement based on direction

  - Domain-specific understanding that common OCR fails at

- Beats the previous best open-source VLM (Qwen 2 VL, 72B parameters)

- Gemma 3 has only 27B parameters, yet it comes out ahead with:

  - Roughly 2.7x fewer parameters (27B vs. 72B)

  - 2-3x faster processing time

New Variant: Gemma 3n

- Much more efficient to run on edge devices

- Shares some architecture from Gemini Nano

- Helps developers experiment with Gemini Nano

- Available as API on Android devices for on-device execution

- Native audio understanding capabilities

- Special focus on edge optimization (confirmed by model creators including Omar)

This is really huge as the Gemma model is truly great, and having it available on edge devices opens up countless possibilities for mobile and offline applications.

Summing It Up

To me, it seems like Google is in the map phase of map-reduce: they are pursuing various verticals and forming deep collaborations and partnerships with leaders in those verticals to solve their complex problems, then bringing the advancements back into their core models (Gemini) and core business (Search).

Their goal would be to have a reduce phase, where all the lessons from solving frontier problems get baked back into their core applications and businesses. This very much feels like how NASA’s advancements in space have a trickling effect on everyday lives.

Having followed Google I/O over the years, and much more closely since the Gemini launch, I see this year’s Google I/O as quite a bullish indicator that Google has a solid roadmap and is on track. The progress from last year’s prototype launches of Astra and Mariner to this year having those features baked into Search speaks for itself.

A lot of AI labs, such as Anthropic and OpenAI, are pivoting to a more product-focused approach with their recent launches, which suggests that having the best model is no longer a moat.

This shows that models alone can’t drive growth; products that solve user problems are what will drive it.

“Distribution” is key to growth in AI, and Google arguably has the biggest distribution.

It’s really fascinating to see and ponder how this all plays out, but Google is truly an AI giant. They have both horizontal and vertical integrations primed for AI:

- Hardware: TPUs (their own chips)

- Infrastructure: Their own cloud (GCP)

- Applications: Google Search, Google Lens to take advantage of AI

- Models: Their own SOTA models

- Platforms: Android for mobile devices and Chrome for all other devices

- Partnerships: Technical collaborations with various industries such as film, music, and new devices such as glasses

This truly feels like their battle to lose. I would be really surprised if they lost it.

The convergence of all these elements - from the massive token usage growth (9.7T to 480T in just one year) to the seamless developer experience in AI Studio, from edge device optimization to domain-specific breakthroughs - paints a picture of a company that’s not just participating in the AI revolution, but actively orchestrating it across multiple fronts.

The real test will be execution at scale, but if this I/O is any indication, Google seems to have learned from their earlier AI missteps and is now moving with both ambition and precision. The next 12-24 months will be crucial in determining whether this integrated approach pays off, but the foundation they’ve laid is undeniably impressive.